BonsaiDb Commerce Benchmark

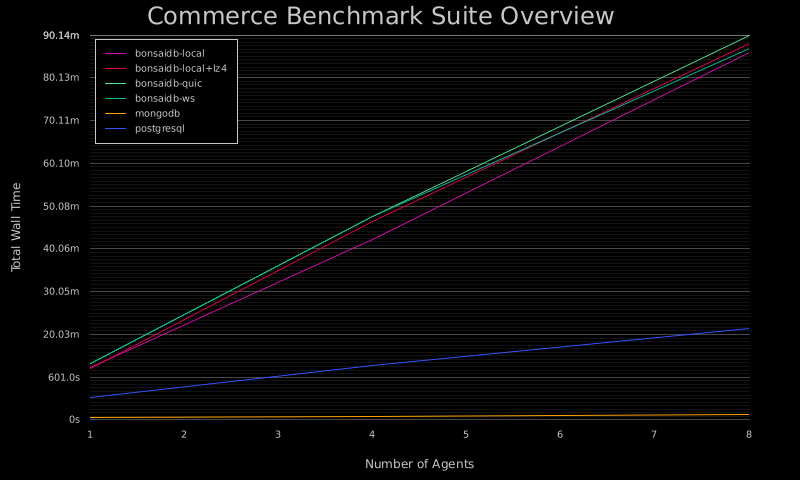

This benchmark suite is designed to simulate the types of loads that an ecommerce application might see under different levels of concurrency and traffic patterns. As with all benchmark suites, the results should not be taken as proof that any database will or will not perform well for any particular application. Each application's needs differ greatly, and this benchmark is designed to help BonsaiDb's developers notice areas for improvement.

| Dataset Size | Traffic Pattern | Concurrency | bonsaidb-local | bonsaidb-local+lz4 | bonsaidb-quic | bonsaidb-ws | mongodb | postgresql | Report |
|---|---|---|---|---|---|---|---|---|---|
| small | balanced | 1 | 20.91s | 20.63s | 24.88s | 24.39s | 3.095s | 7.625s | Full Report |
| small | balanced | 4 | 72.76s | 72.59s | 75.43s | 68.86s | 4.024s | 12.11s | Full Report |
| small | balanced | 8 | 135.3s | 137.7s | 137.1s | 139.3s | 7.098s | 14.54s | Full Report |
| small | readheavy | 1 | 8.958s | 9.509s | 12.55s | 11.53s | 1.994s | 4.042s | Full Report |
| small | readheavy | 4 | 29.68s | 29.54s | 29.54s | 28.94s | 3.429s | 5.603s | Full Report |
| small | readheavy | 8 | 59.08s | 59.28s | 57.13s | 58.66s | 4.962s | 6.646s | Full Report |
| small | writeheavy | 1 | 119.0s | 120.9s | 131.4s | 127.7s | 7.768s | 46.90s | Full Report |
| small | writeheavy | 4 | 533.7s | 784.1s | 815.1s | 814.1s | 9.640s | 191.7s | Full Report |
| small | writeheavy | 8 | 24.07m | 24.52m | 25.58m | 24.41m | 17.64s | 337.3s | Full Report |
| medium | balanced | 1 | 44.08s | 43.17s | 50.46s | 48.19s | 3.026s | 16.14s | Full Report |
| medium | balanced | 4 | 156.9s | 153.6s | 147.2s | 158.8s | 4.338s | 27.76s | Full Report |
| medium | balanced | 8 | 315.9s | 292.2s | 293.8s | 312.1s | 5.915s | 28.17s | Full Report |
| medium | readheavy | 1 | 16.94s | 16.62s | 20.12s | 19.27s | 2.716s | 8.712s | Full Report |
| medium | readheavy | 4 | 60.93s | 62.73s | 59.80s | 60.40s | 3.832s | 11.48s | Full Report |
| medium | readheavy | 8 | 118.4s | 122.4s | 118.2s | 133.7s | 5.102s | 12.51s | Full Report |
| medium | writeheavy | 1 | 259.2s | 247.2s | 269.9s | 272.6s | 7.630s | 89.08s | Full Report |
| medium | writeheavy | 4 | 787.5s | 784.8s | 801.2s | 798.7s | 9.846s | 206.4s | Full Report |
| medium | writeheavy | 8 | 23.44m | 24.86m | 24.40m | 23.89m | 16.06s | 391.7s | Full Report |
| large | balanced | 1 | 42.73s | 43.91s | 47.50s | 55.07s | 2.958s | 28.05s | Full Report |
| large | balanced | 4 | 146.6s | 145.3s | 149.6s | 148.8s | 4.324s | 33.39s | Full Report |
| large | balanced | 8 | 290.8s | 320.2s | 292.3s | 288.0s | 6.815s | 37.65s | Full Report |
| large | readheavy | 1 | 18.09s | 19.20s | 20.88s | 20.71s | 2.372s | 17.99s | Full Report |
| large | readheavy | 4 | 58.92s | 57.44s | 57.30s | 58.89s | 3.317s | 22.60s | Full Report |
| large | readheavy | 8 | 108.2s | 107.6s | 105.1s | 104.6s | 4.138s | 21.23s | Full Report |
| large | writeheavy | 1 | 214.3s | 207.6s | 220.8s | 216.8s | 7.846s | 102.7s | Full Report |
| large | writeheavy | 4 | 694.2s | 697.0s | 728.2s | 727.8s | 9.994s | 255.2s | Full Report |
| large | writeheavy | 8 | 21.34m | 21.51m | 23.44m | 21.31m | 15.85s | 430.7s | Full Report |

Dataset Sizes

The three dataset sizes are named "small", "medium", and "large". All databases being benchmarked can handle much larger datasets than "large", but it is impractical at this time to run larger benchmarks on a regular basis. Each run's individual page shows the initial dataset breakdown by type.

Traffic Patterns

This suite uses a probability-based system to generate plans for agents to process concurrently. These plans operate in a "funnel" pattern of searching, adding to cart, checking out, and reviewing the purchased items. Each stage in this funnel is assigned a probability, and these probabilities are tuned to simulate three traffic patterns: read-heavy, which performs more searches than purchases; write-heavy, where most plans result in purchasing and reviewing the products; and balanced, which is meant to simulate a moderate amount of write traffic.
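The funnel described above can be sketched in a few lines of Rust. This is a minimal illustration, not the suite's actual implementation: the `Stage`, `FunnelConfig`, `XorShift`, and `generate_plan` names are hypothetical, the sketch assumes every plan begins with a search, and it uses a small built-in xorshift generator only to avoid external crates.

```rust
/// One step an agent can perform in the shopping funnel (hypothetical names).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Stage {
    Search,
    AddToCart,
    Checkout,
    Review,
}

/// Probability of advancing into each later stage.
/// These fields are illustrative; the suite's real values are not shown here.
#[derive(Debug, Clone, Copy)]
pub struct FunnelConfig {
    pub add_to_cart: f64,
    pub checkout: f64,
    pub review: f64,
}

/// Tiny xorshift64 generator so the sketch needs no external crates.
pub struct XorShift(u64);

impl XorShift {
    pub fn new(seed: u64) -> Self {
        // A xorshift state of zero would stay zero forever, so replace it.
        Self(if seed == 0 { 0x9E37_79B9_7F4A_7C15 } else { seed })
    }

    /// Returns a value in the half-open range [0, 1).
    pub fn next_f64(&mut self) -> f64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        // Keep the top 53 bits, which fit exactly in an f64 mantissa.
        (x >> 11) as f64 / (1u64 << 53) as f64
    }
}

/// Builds one plan: always search (an assumption), then advance through the
/// funnel while each probability check passes, stopping at the first failure.
pub fn generate_plan(config: &FunnelConfig, rng: &mut XorShift) -> Vec<Stage> {
    let mut plan = vec![Stage::Search];
    let later_stages = [
        (Stage::AddToCart, config.add_to_cart),
        (Stage::Checkout, config.checkout),
        (Stage::Review, config.review),
    ];
    for (stage, probability) in later_stages {
        if rng.next_f64() >= probability {
            break;
        }
        plan.push(stage);
    }
    plan
}
```

Under this sketch, a read-heavy pattern would use low probabilities so that most plans stop after searching, while a write-heavy pattern would use probabilities close to 1.0 so that most plans reach checkout and review.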

Concurrency

The suite is configured to run the plans up to three times, depending on the number of CPU cores present: 1 agent, 1 agent per core, and 2 agents per core.
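One way to read "up to three times" is that duplicate agent counts collapse on machines with few cores. The sketch below illustrates that interpretation; `concurrency_levels` and `detected_cores` are hypothetical helper names, and the deduplication step is an assumption rather than the suite's confirmed behavior.

```rust
use std::thread;

/// Returns the agent counts to benchmark for a machine with `cores` cores:
/// 1 agent, 1 agent per core, and 2 agents per core, with duplicates removed.
pub fn concurrency_levels(cores: usize) -> Vec<usize> {
    let cores = cores.max(1);
    // The list is already in ascending order, so `dedup` removes any repeats.
    let mut levels = vec![1, cores, cores * 2];
    levels.dedup();
    levels
}

/// Detects the core count, falling back to 1 if it cannot be determined.
pub fn detected_cores() -> usize {
    thread::available_parallelism()
        .map(|n| n.get())
        .unwrap_or(1)
}
```

With this reading, `concurrency_levels(4)` yields 1, 4, and 8 agents, which matches the concurrency values in the results table, while a single-core machine would run only two levels (1 and 2 agents).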